Why AHP works for Prioritization

The Analytic Hierarchy Process (AHP) is a Decision Science solution ideally suited to prioritizing complex project portfolios. So whether your current project selection tool is a Magic Spreadsheet or a Magic Eightball, we believe AHP will improve performance.

AHP enables you to turn strategy into a model

Analytic Hierarchy Process is the solution you need to form a scalable bridge between your strategy and your execution. It is a mechanism for decomposing your goals into discrete, often competing, components that cover the rationale of your portfolio.

Don't take our word for it. Researchers at the University of New South Wales looked at over 100 methodologies for prioritizing projects and AHP came out as one of only 2 "suitable" methods for large organizations.

So what makes it so good? Well, it comes back to resolving that tension between business drivers. For example, you may have ‘growth’ set against ‘cost control’, or ‘environmental responsibility’ versus ‘short term profit’. That’s fine – it’s how the real world works. But good planning needs to be able to turn this paradox into a quantifiable framework, which means determining the relative importance of competing goals. To do this we use an approach called Pairwise.

Pairwise is the best methodology for determining relative importance

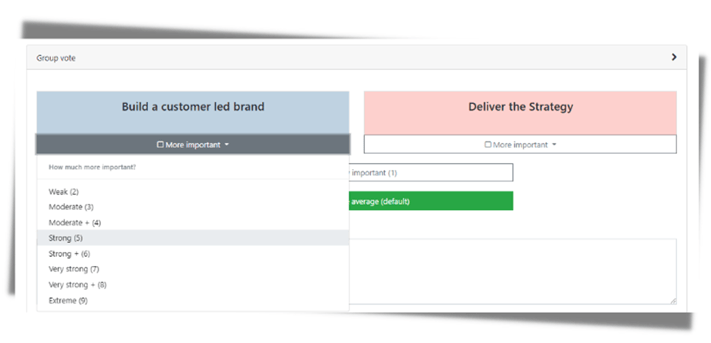

Pairwise is very simple for folks taking a survey: which of these competing goals matters more, and by how much? Research has shown that people don’t work well with determining abstract weights (that criterion should be 23.7%!). However, they are good at relative judgements – “A is a little more important than B, but a lot more important than C”. This seems simple but it’s based on extensive research that shows that people work better with a verbal scale than with abstract values.

It enables you to create user-friendly questions which look like this:

Less simple is the maths used to build the model, including an inconsistency algorithm which quantifies the extent to which you disagree with yourself and a normalization calculation that turns your preferences into a clear, actionable model. But don't worry: software can deliver this without you having to worry about the technical details.
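For the curious, here is a minimal sketch of the normalization step. It assumes the row geometric mean, a common approximation to the eigenvector calculation used in AHP; the criteria names and judgements are hypothetical, and this is not necessarily how any particular tool does it.

```python
import math

def priority_weights(matrix):
    """Approximate AHP weights: row geometric means, normalized to sum to 1."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]

# Hypothetical judgements: matrix[i][j] says how many times more
# important criterion i is than criterion j (reciprocals below the diagonal).
criteria = ["Growth", "Cost control", "Environment"]
pairwise = [
    [1.0, 2.0, 4.0],
    [1 / 2, 1.0, 2.0],
    [1 / 4, 1 / 2, 1.0],
]

weights = priority_weights(pairwise)
for name, weight in zip(criteria, weights):
    print(f"{name}: {weight:.1%}")
```

Because these example judgements are perfectly consistent, the weights come out as exactly 4/7, 2/7 and 1/7. Nobody had to guess an abstract percentage to get there.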

The key to Pairwise is that it forces you to make choices. Your strategy probably doesn’t differentiate between the elements that keep the CEO up at night, and the elements that got shoe-horned in because every director needed a couple of bullet points to call their own. That means it will fail as the base for a prioritization model because it’s not making the hard choices, and if everything is important… then nothing is important.

Structuring Criteria creates a measurable bridge between strategy and projects

The “H” in AHP stands for Hierarchy. For example, breaking your model into Productivity vs. Environment is a start, but neither of these goals is specific enough to be measurable, so add layers (‘sub-criteria’) beneath. Perhaps “Productivity” is best defined as types of saving (headcount vs materials for example) or by channel (call center vs sales). This means you get the granularity needed for measurement, connected to the high-level trade-offs that define a good strategy. Similarly, “Environment” could be expressed as impact on Emissions, Water Usage and Recycling (for example) as more specific benefits against which different environmental projects will be measured.

As above, you then apply pairwise to weight these competing branches in the model, so you continue to make practical trade-offs between competing objectives.

For each sub-criterion you build a scale to make it quantifiable. Typically, these scales start with an ‘ideal’ outcome (where the sub-criterion is fully satisfied), then step down through less good choices. There’s no fixed rule but having five options works well. It’s very similar to a Likert scale which you’d have seen in psychometric questionnaires (“Do you... Strongly Agree / Agree / Neutral etc.”)
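To make the hierarchy concrete, here is a rough sketch of how weights and scales could combine into a single project score. Every weight, scale value and rating below is made up for illustration, and sub-criterion names are assumed to be unique across the model.

```python
# Hypothetical two-level model: (criterion weight, {sub-criterion: local weight}).
# Weights at each level sum to 1, as they would after a pairwise review.
hierarchy = {
    "Productivity": (0.6, {"Headcount savings": 0.5, "Materials savings": 0.5}),
    "Environment": (0.4, {"Emissions": 0.5, "Water usage": 0.3, "Recycling": 0.2}),
}

# Hypothetical five-step scale, from the 'ideal' outcome down to no benefit.
SCALE = {"Ideal": 1.0, "Strong": 0.75, "Moderate": 0.5, "Weak": 0.25, "None": 0.0}

def global_weights(model):
    """Multiply each sub-criterion's local weight by its parent's weight."""
    return {
        sub: parent_weight * local_weight
        for parent_weight, subs in model.values()
        for sub, local_weight in subs.items()
    }

def project_score(ratings, model):
    """Weighted sum of scale values, expressed on a 0-100 scale."""
    weights = global_weights(model)
    return 100 * sum(weights[sub] * SCALE[ratings[sub]] for sub in weights)

ratings = {
    "Headcount savings": "Strong",
    "Materials savings": "None",
    "Emissions": "Ideal",
    "Water usage": "Moderate",
    "Recycling": "Weak",
}
score = project_score(ratings, hierarchy)
print(f"Project score: {score:.1f}")
```

The scorer only ever picks from a short verbal scale. The weights from the pairwise review do the rest.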

Psychology research shows the value of structured collaboration

There is extensive research which demonstrates why prioritization should be approached as a group through a staged review process. While these benefits are not exclusive to AHP, it is ideally suited to delivering such a process. Here’s why:

Groups are better than individuals

Individual people make random mistakes – this is called Noise. This phenomenon is beautifully shown by Daniel Kahneman’s book on the subject (this article is a good summary of key points if you’re short on time). In a nutshell, people are… human. They are often ‘wrong’ in their judgements. It might be because they have knowledge gaps. It might be because it’s late and they’re tired, or their sports team lost a big game last night. The key point is that a single person’s judgement is flawed. However, combine it with others’ perspectives and (statistically speaking) mistakes even out to produce better data. Read more about Noise in Decision-Making.

Put another way, let’s think about “The Wisdom of the Crowd”, a concept devised by Francis Galton, the founder of psychometrics. If you’re guessing the number of sweets in the jar at the local fair and really want to win… then don’t guess. Wait until everyone else has made their judgement, calculate the average of their estimates, and bingo, you’ll win the candy. Syndicated knowledge is more powerful than an individual point of view.

Applying this to a corporate planning process, it could simply mean having three people score a project, rather than one, thus cutting the Noise in the data they produce by roughly 40% (averaging four independent scores would halve it). So don’t let the CEO arbitrarily determine pairwise judgements alone; make sure she’s part of a team so her colleagues can balance out the inevitable limitations in her judgement.
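The arithmetic behind averaging can be sketched as follows. This is an illustration only, assuming every scorer's error is independent and of similar size; in that case averaging n scores shrinks the noise by a factor of the square root of n. The function names are ours, and the simulation uses random numbers rather than real scoring data.

```python
import math
import random
import statistics

def expected_noise_reduction(n_scorers):
    """Fraction of noise removed by averaging n independent, equally noisy scores."""
    return 1 - 1 / math.sqrt(n_scorers)

def simulated_noise_ratio(n_scorers, trials=20_000, seed=1):
    """Spread of the averaged score relative to the spread of a single score."""
    rng = random.Random(seed)
    single = [rng.gauss(0, 1) for _ in range(trials)]
    averaged = [
        statistics.fmean([rng.gauss(0, 1) for _ in range(n_scorers)])
        for _ in range(trials)
    ]
    return statistics.pstdev(averaged) / statistics.pstdev(single)

for n in (1, 3, 4):
    print(f"{n} scorer(s): {expected_noise_reduction(n):.0%} of noise removed")

ratio_three = simulated_noise_ratio(3)
print(f"Simulated noise remaining with 3 scorers: {ratio_three:.2f}")
```

Under these assumptions three scorers remove about 42% of the noise and four remove 50%. Shared biases do not average out this way, which is one reason the process design matters as much as the headcount.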

Create a staged process to improve data quality

Getting everyone into a room to vote might seem like the obvious solution to deliver collaboration, but it is not the best option. There are three main reasons - Group Think, Anchoring and Consistency.

Group Think describes the pressure to conform within a group, an effect famously demonstrated by Solomon Asch's conformity experiments. He showed that people tend to follow the herd, even when their own eyes tell them otherwise. This was either because they wanted to fit into the group, or because they doubted their own judgement in the face of others’ opinions. Watch this to learn more.

Anchoring is one of many cognitive biases that can explain poor decision making. It happens when one person speaks first to direct (or potentially misdirect) the group by creating a reference point to which all subsequent options are compared. If that person is loud or senior that effect may prove irreversible, as the discussion builds on a flawed initial judgement. Check out our own experiment with anchoring here.

A key feature of pairwise is the ability to measure consistency. Because you are comparing all options at each level of the hierarchy, there is potential for you to disagree with yourself. Every rating on the Saaty scale used for AHP implies a ratio of relative importance. The extent to which those ratios do not align can be calculated with an algorithm and fed back to users as a flag to highlight where their judgements don’t make logical sense. Think of this in terms of basic maths. If A = 2B and B = 2C, then A should equal 4C. If it does not, then there is inconsistency. From a practical perspective this happens all the time, so an iterative process to re-score ‘wrong’ answers is highly recommended. Read more about inconsistency here.
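Here is a minimal sketch of how such a flag could be computed, using Saaty's consistency ratio. It assumes the standard published random index values and the conventional 0.1 threshold; the matrices are hypothetical, and a real tool may implement the check differently.

```python
# Saaty's random index values for matrices of size 3 to 9.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45}

def principal_eigenvalue(matrix, iterations=100):
    """Estimate the largest eigenvalue of a pairwise matrix by power iteration."""
    n = len(matrix)
    w = [1.0 / n] * n
    for _ in range(iterations):
        aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(aw)
        w = [x / total for x in aw]
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    return sum(aw[i] / w[i] for i in range(n)) / n

def consistency_ratio(matrix):
    """Consistency index divided by the random index for this matrix size."""
    n = len(matrix)
    if n < 3:
        return 0.0  # one or two items cannot contradict each other
    consistency_index = (principal_eigenvalue(matrix) - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]

# A = 2B, B = 2C and A = 4C: the judgements agree with each other.
consistent = [[1, 2, 4], [1 / 2, 1, 2], [1 / 4, 1 / 2, 1]]
# A = 2B, B = 2C, but A rated equal to C: the judgements clash.
inconsistent = [[1, 2, 1], [1 / 2, 1, 2], [1, 1 / 2, 1]]

for label, matrix in (("consistent", consistent), ("inconsistent", inconsistent)):
    cr = consistency_ratio(matrix)
    flag = "re-score" if cr > 0.1 else "ok"
    print(f"{label}: CR = {cr:.3f} ({flag})")
```

A ratio of zero means the judgements line up perfectly. The second matrix lands well above 0.1, so it would be sent back for another look.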

To overcome these problems is simple. Ask respondents to complete a pairwise survey on their own. Take time to reflect, articulate potentially controversial points and validate the mathematical consistency of their scores. Only then get the group together, ideally with an objective facilitator skilled at using the data to structure an inclusive debate. This means everyone’s opinion is considered and visible. It enables people to learn from one another and to debate about points of divergence. This approach also has the benefit of saving time, as areas of alignment can pass untouched. After all, having a nice chat about consensus topics is low value add, unless the meeting has really good biscuits.
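Once individual surveys are in, they need to be combined into a group view. One common way to do this in AHP, shown here as an assumption rather than a description of any specific tool, is to take the geometric mean of each judgement across respondents, which keeps the merged matrix reciprocal. The respondents and judgements are hypothetical.

```python
import math

def aggregate_judgements(matrices):
    """Element-wise geometric mean of several respondents' pairwise matrices."""
    k = len(matrices)
    n = len(matrices[0])
    return [
        [math.prod(m[i][j] for m in matrices) ** (1.0 / k) for j in range(n)]
        for i in range(n)
    ]

# Hypothetical surveys over three criteria, completed separately.
alice = [[1, 4, 2], [1 / 4, 1, 1 / 2], [1 / 2, 2, 1]]
bob = [[1, 1, 2], [1, 1, 2], [1 / 2, 1 / 2, 1]]

group = aggregate_judgements([alice, bob])
for row in group:
    print([round(value, 2) for value in row])
```

Alice rates the first criterion four times as important as the second, Bob rates them equal, and the group judgement settles at two. That gap is exactly the kind of divergence worth debating in the room.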

Better Buy-In drives better outcomes

A well-structured inclusive pairwise review will build buy-in to the model it generates. There are three reasons:

Firstly, if stakeholders are included in the process and see it was well structured and based on objective best practice, then they are more likely to see it as ‘fair’. Subsequently, they are less likely to challenge when they are told “no”. This is about building a culture of high-quality planning, rather than accepting back-channel politics as a way things get done.

Secondly, if people feel they have been heard, then they have more buy-in. Even if they don’t get others to change their voting, they’ve still got confidence that the system is considering things from their perspective, and that their interests are influencing the overall model.

Finally, it improves motivation. Teams picking up the work will perform better if they are confident that they are assigned to the right projects – don't just take our word for it, check out this video from Stanford. A well-built pairwise model helps deliver this engagement, as does participation in the scoring process that follows it (check out this webinar for more on scoring projects). Adam Grant captures this nicely:

“Often our productivity struggles are caused not by a lack of efficiency, but a lack of motivation,”

Put another way, don’t just fixate on ‘resources’ as a number in Excel. They are people who will deliver more if they believe in the task assigned to them.

Research says AHP is the best solution for Prioritization

If this sounds good… it’s because it is. AHP has been proven over decades, with research by the University of New South Wales identifying it as one of the two best models for prioritization. They analyzed over 100 MCDM (Multi-Criteria Decision Making) techniques and chose AHP and DEA as the most suitable approaches. We found DEA to be difficult to both understand and implement, so decided to make AHP our preferred approach for building a solution for commercial applications.

Therefore it’s no surprise that further research from the same institution identifies AHP as a critical component of best practice for Australian public investment, when it comes to delivering Value for Money to taxpayers. Looking at three real world ‘mega projects’ they show how AHP works alongside financial modelling such as ROI to provide a rounded view of how to prioritize.

Want to learn more? Watch this Webinar:

We haven’t found a better alternative

Here at TransparentChoice we want to help make the world more rational by unlocking the power of our clients’ awesome-ness. We chose to do that with AHP because it’s the best tool for the job. Here’s why we’re less keen on the alternatives:

Consider ‘traditional’ financial models that generate an ROI or NPV for your project. They are great if your criteria are purely financial, and you have reliable projections of value creation. But in the real world how often is this true? There are four issues we see time and again.

Firstly, qualitative judgements matter but don’t fit a financial model. Criteria such as stakeholder impact, risk reduction and customer service are impossible to quantify without massive guesses.

Secondly, early-stage projects often lack the detail needed to forecast with credible accuracy, so modelling 5-year cash flow projections has about as much use as that magic eight ball, but with considerably more effort.

Thirdly, ROI-type models tend to be somewhat ‘black-box’, with a lack of transparency on how they worked out your project was great / not great. Not great for building buy-in.

Finally, a well-built AHP model can incorporate financial metrics as one of the criteria, so there’s no need to choose between financial ROI and AHP. AHP is simply an evolution of the traditional approach to working out an ROI, but your definition of “return” incorporates both financial and non-financial criteria.

The world of product development has long recognized the value of prioritization and has come up with many methodologies to solve it, such as MoSCoW or RICE. However, they tend to use narrow product prioritization criteria, which are simply not suitable for project prioritization. They lack the flexibility to factor in broader strategic considerations and cannot knit together product backlogs with work happening in other teams. As such they tend to entrench silos between “Tech” and “The Business” rather than driving alignment.

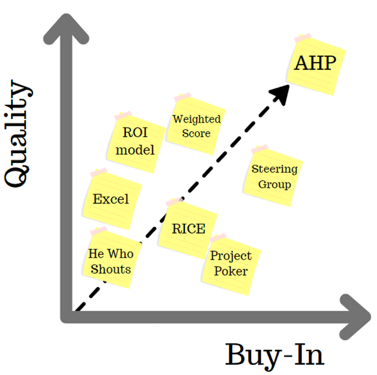

The traditional spreadsheet hack of “Weighted Scores” offers more flexibility, and, as the name suggests, it is like AHP, but skips the decision science discipline of pairwise, and the collaborative approach to model building. Conversely Project Poker is fun for teamwork with a small, tactical development team, but is far too simplistic for prioritizing a portfolio with multiple drivers and competing criteria. We could go on. There are a lot of choices out there. This blog is great if you want to learn more.

Or simply go old school, ignore prioritization and muddle along with ‘he who shouts loudest’, probably with a side of spreadsheet. If you’re a small group with a focused goal this may work. But if you’re a regular organization with bickering divisions and a bunch of objectives then it probably won’t. You’ll have Too Many Projects, and waste tons of time in meetings where you’ll be either begging for resource or dodging tasks you see as pointless (or possibly both). There may be a committee who get together every month to try and make sense of the chaos, but beware the man-hours spent Power-Pointing in the build-up and hacking together a roadmap in the aftermath. There are also a host of reasons why the PMO should be wary of spreadsheet-based prioritization.

We should also highlight that some software out there will claim to ‘do prioritization’ by having a data field where you key in a rank. This is a bit like when my kids claim to ‘do the dishes’ by leaving plates in a 10-foot zone around the dishwasher. It’s better than nothing, but only just.

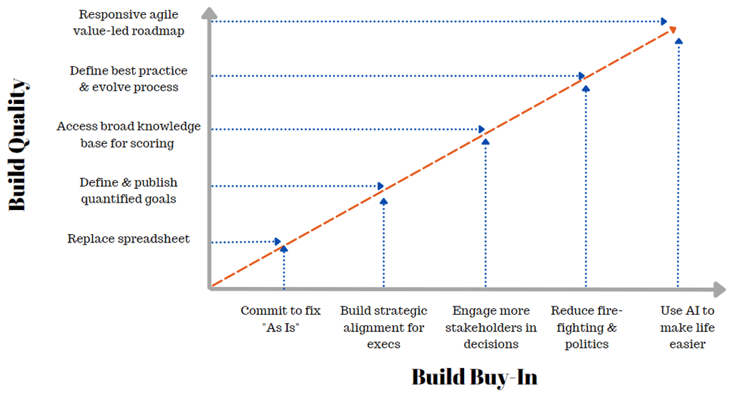

When we think about different solutions for prioritization, there are two criteria we believe matter. Does it deliver a quality decision, and does it build buy-in to that decision?

We chose to use AHP because, as we see it in this chart, AHP is a hands-down winner (ping me if you want to challenge our read on this, we love a debate!).

In short, if you have a purely financial portfolio, a simple product backlog, or an organization that fits into one room then AHP may not be your best choice. Otherwise, read on.

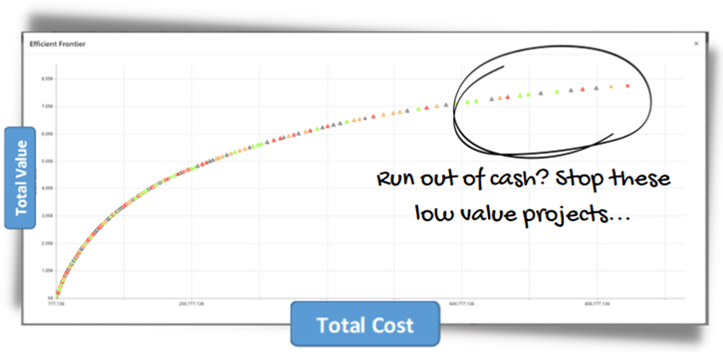

AHP gets you these charts

Every CEO will tell you they are data-led, rational, professional leaders who want to make smart decisions. But if you can’t quantify your projects, then your selection process is not being led by data. Put another way, you need to be able to analyze your portfolio like this, or you're not doing it properly.

These charts are made possible because AHP quantifies project value in a simple 0-100 model, and we believe they are critical solutions in a PMO's portfolio management toolkit. You might be able to create them in a spreadsheet, but it's probably about as likely as my kids loading the dishwasher of their own accord.

Once you have this core analytics framework you can also overlay additional modelling, such as risk, or a hierarchy view to see distribution of activity by division. The key is that quantification unlocks transparency. We have a whole other blog on that.

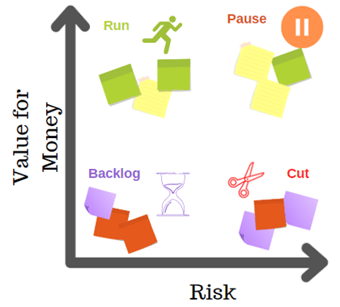

AHP helps manage risk

Dealing with risk is a key challenge in most planning processes. Ignore risk at your peril, but let it dominate and you become too cautious. Every organization is different; the key is that you determine the right approach as part of planning. Here are the main options:

- Make risk a gating factor to fast track ‘must do’ projects.

- Build a risk model using AHP, which scores projects according to what happens if the project is not done, enabling pragmatic judgements around 'grey areas'.

- Detailed quantitative risk modelling is not right for AHP, but if you have this data, you can integrate it into an AHP model, or use it to compare vs. overall project value.

- Score project riskiness as a discount to the value of your project, thus reducing the score where (for example) organizational readiness is low or risk of disruption is high.
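As a rough illustration of the last option, riskiness could be applied as a percentage discount on the value score. The risk factors, their weights and the 50% cap on the discount are all hypothetical choices made for this sketch, not a prescribed formula.

```python
def risk_adjusted_score(value_score, risk_levels, risk_weights, max_discount=0.5):
    """Discount a 0-100 value score by a weighted risk level between 0 and 1."""
    weighted_risk = sum(risk_weights[k] * risk_levels[k] for k in risk_weights)
    return value_score * (1 - max_discount * weighted_risk)

adjusted = risk_adjusted_score(
    value_score=80.0,
    # 0 = no concern, 1 = maximum concern (hypothetical ratings).
    risk_levels={"Organizational readiness": 0.5, "Disruption": 0.25},
    # Relative importance of each risk factor; sums to 1.
    risk_weights={"Organizational readiness": 0.6, "Disruption": 0.4},
)
print(f"Risk-adjusted score: {adjusted:.1f}")
```

Here a project worth 80 points is discounted to 64 because readiness is middling and some disruption is expected. The cap keeps risk from wiping out value altogether.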

Ultimately only hindsight can tell us which risks we should have ignored, and which we should have addressed. However, in the absence of that knowledge we believe AHP enables the next best thing, an intelligent data-led decision, where data visualization enables your leadership to exercise considered judgements.

AHP enables Human Intelligence and Artificial Intelligence to work together

Turning subjective opinion into data points means you can now use algorithms to support your prioritization. This means a complex portfolio, defined by multiple competing constraints can be maximized for value in minutes, with computational optimization designed to ‘fill the backpack’ as effectively as possible.
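The ‘fill the backpack’ idea is the classic knapsack problem. The sketch below is deliberately simplified: it assumes a single budget constraint with whole-number costs, whereas a real portfolio has several constraints at once. Project names, scores and costs are invented for the example.

```python
def select_portfolio(projects, budget):
    """Pick the project set with the highest total score within the budget.

    projects: list of (name, score, cost) tuples with integer costs.
    Returns (total_score, tuple_of_selected_names).
    """
    # best[b] holds the best (score, selection) achievable with budget b.
    best = [(0, ()) for _ in range(budget + 1)]
    for name, score, cost in projects:
        # Walk budgets downwards so each project is used at most once.
        for b in range(budget, cost - 1, -1):
            candidate = best[b - cost][0] + score
            if candidate > best[b][0]:
                best[b] = (candidate, best[b - cost][1] + (name,))
    return best[budget]

# Hypothetical projects: (name, AHP score, cost in budget units).
projects = [
    ("CRM upgrade", 80, 6),
    ("Solar roof", 60, 4),
    ("Call-center bot", 45, 3),
    ("Data lake", 30, 2),
]

total, chosen = select_portfolio(projects, budget=9)
print(total, chosen)
```

Simply funding the highest-scoring project first would pick the CRM upgrade and the call-center bot for 125 points. The optimizer finds that the three smaller projects together deliver 135 for the same budget.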

Similarly, you can also use the same logic to turn your backlog into a value-led roadmap, designed to schedule your workload based on outcomes, rather than simply settling for the first combination of projects that doesn’t break your delivery teams.

AHP builds velocity in planning

Adding in extra process around defining goals, and producing a pairwise model may seem, in the short term, like adding time into planning, and can raise resistance from some executives who would rather ‘get on with it’. There are five reasons why those impatient folks are wrong:

- The end-to-end delivery for AHP can be less than 8 weeks if there is urgency in deployment. Read more here.

- A well-structured planning process builds muscle memory. Organizations perform best when routines are embedded, and people know what to do. AHP introduces new ways of problem solving that may feel odd for the first thirty minutes but will soon become second nature.

- Up-front planning reduces time spent fixing poor prioritization. If you have a transparent plan which respects capacity, involves stakeholders, analyzes risk, and utilizes subject matter expertise there are far fewer potential triggers for plans to be (painfully / slowly) revisited.

- Once executives complete their pairwise review, they have a policy framework which can be published and left. This is typically an annual task, which enables teams to ideate and select investments aligned to strategy, all without executive micro-management at project level.

- We all know things can change – think pandemic and economic crisis. An AHP model is designed to enable a portfolio to make a fast pivot. Simply re-weight criteria to reflect the new challenges, and your scores are now updated, enabling data-led choices to be made quickly and effectively. Use AI functionality and you've got a new roadmap by lunchtime.
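The pivot described in the last point can be sketched in a few lines. This assumes projects have already been rated against each criterion on a 0 to 1 scale; the projects, ratings and both sets of weights are hypothetical.

```python
def rank_projects(project_ratings, weights):
    """Score each project on a 0-100 scale and sort from highest to lowest."""
    scores = {
        name: 100 * sum(weights[c] * rating for c, rating in ratings.items())
        for name, ratings in project_ratings.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Ratings stay the same; only the criteria weights change.
project_ratings = {
    "New market launch": {"Growth": 0.9, "Cost control": 0.2, "Resilience": 0.3},
    "Supplier consolidation": {"Growth": 0.2, "Cost control": 0.9, "Resilience": 0.6},
    "Remote-work platform": {"Growth": 0.4, "Cost control": 0.5, "Resilience": 0.9},
}
weights_before = {"Growth": 0.6, "Cost control": 0.3, "Resilience": 0.1}
weights_after = {"Growth": 0.2, "Cost control": 0.3, "Resilience": 0.5}

ranking_before = rank_projects(project_ratings, weights_before)
ranking_after = rank_projects(project_ratings, weights_after)
print("Before:", [name for name, _ in ranking_before])
print("After: ", [name for name, _ in ranking_after])
```

With growth as the top priority the market launch leads the list. Shift the weight to resilience and it drops to last, with no project needing to be re-scored.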

Our clients tell us that AHP works

At TransparentChoice we help clients realize the value of AHP-led prioritization. The journey for each client is different, but there are key themes we see time and again.

Less waste. The average portfolio is 20% ‘waste’ – poorly aligned projects that should simply stop. Identifying these pet projects saves big. Listen to Mike on how a US Trade body did just that.

Alignment from working together. “We’re having conversations we should have had years ago”. The CEO of a major Canadian insurance company found that completing their Pairwise review helped his leadership team face into disagreements which had undermined previous attempts to prioritize.

Stop doing ‘Too Many Projects’. A global law firm’s IT team were suffering from a diverse set of stakeholders battling to shoehorn their projects into the backlog. By imposing an AHP framework, people understood what kind of projects were worthwhile, and that a ‘No’ was fair.

Transparency between divisions. A global bank had a lot of data, but no clarity on what was being worked on and what it would deliver. Using AHP they were able to quantify the value of a huge multi-divisional portfolio and see where their money was being spent across all teams.

Common Language. A global manufacturer needed to merge investment portfolios to help realize the value of a business merger. Listen to how Plinio used AHP to make this happen 10x faster.

Agile re-prioritization. A major UK Charity needed to reboot their backlog after COVID. Hear how Jodie used AHP to create a new plan that was fast, and data led.

Define Better Best Practice. By putting AHP into the heart of your prioritization you create a framework for delivering a sustainable, fair, effective approach to determining what you work on. Don’t believe us? Listen to Gerry’s story.

Deploy AHP for progressively greater benefits

Prioritizing with AHP is a journey in improving both quality and buy-in for decision making. Fully realizing these benefits is not predominantly a technical challenge, but a cultural one. We see this happening as an evolution, looking something like this:

If you're reading and nodding then AHP is a solution you need. At TransparentChoice we've spent the last few years putting all this Decision Science-y goodness into a user interface that unlocks all these benefits without you having to learn what an Eigenvector does, or why Geometric Averages rock. If you're ready for a quick demo on Zoom we're up for it. Or just message me and let's prioritize the heck out of your portfolio!